Google’s TurboQuant shrinks LLM memory 6x with zero accuracy loss

Gemini 3.1 Flash Live API for real-time agent interactions

Welcome back! OP here again with another edition of Agent Pulse, your go-to spot for agentic news, insights and more.

In today’s issue:

👉 TOP Agentic News

✨ Featured Agents

🎙️ What to Watch this Week

⚔️ Agent Arena Battleboard

🏆 Agents Leaderboard

🗺️ Agents Landscape Map

Google introduced Gemini 3.1 Flash Live via the Gemini Live API in Google AI Studio, enabling developers to build real-time, streaming AI experiences with low latency. The model is designed for continuous interaction—supporting voice, text, and multimodal inputs—making it well-suited for agents that need to respond instantly rather than in discrete turns. Unlike traditional request/response APIs, the Live API allows persistent sessions, where agents can maintain context and react dynamically as new input arrives. Strategically, this pushes Gemini further into agent infrastructure territory, positioning it as a foundation for interactive, always-on agents across use cases like copilots, customer support, and real-time assistants.
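To make the request/response versus persistent-session contrast concrete, here is a toy sketch in plain Python `asyncio`. It does not call the Gemini SDK: `ToyLiveSession`, `send`, `responses` and `demo` are hypothetical names invented for illustration, and the "model" simply echoes how much context it has seen. The point is only that one long-lived session keeps accumulating context while input keeps arriving.

```python
import asyncio


class ToyLiveSession:
    """Hypothetical stand-in for a persistent streaming session (not the Gemini SDK)."""

    def __init__(self):
        self.context = []              # survives for the whole session
        self.inbox = asyncio.Queue()   # input chunks arrive here at any time

    async def send(self, chunk):
        await self.inbox.put(chunk)

    async def close(self):
        await self.inbox.put(None)     # sentinel: no more input

    async def responses(self):
        # React to each chunk as it arrives instead of waiting for a full turn.
        while True:
            chunk = await self.inbox.get()
            if chunk is None:
                break
            self.context.append(chunk)
            yield f"seen {len(self.context)} chunk(s); latest: {chunk}"


async def demo():
    session = ToyLiveSession()
    replies = []

    async def producer():
        for chunk in ["hello", "what's the weather", "in Paris?"]:
            await session.send(chunk)
        await session.close()

    async def consumer():
        async for reply in session.responses():
            replies.append(reply)

    # Input and output run concurrently over the same session.
    await asyncio.gather(producer(), consumer())
    return replies


if __name__ == "__main__":
    for line in asyncio.run(demo()):
        print(line)
```

A real integration would replace the echo logic with the provider's streaming client; the shape of the loop (send while receiving, context held by the session) is the part this sketch is meant to show.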

“Every business, just like they have a website, and a phone number, and an email address, is also going to have an AI.”

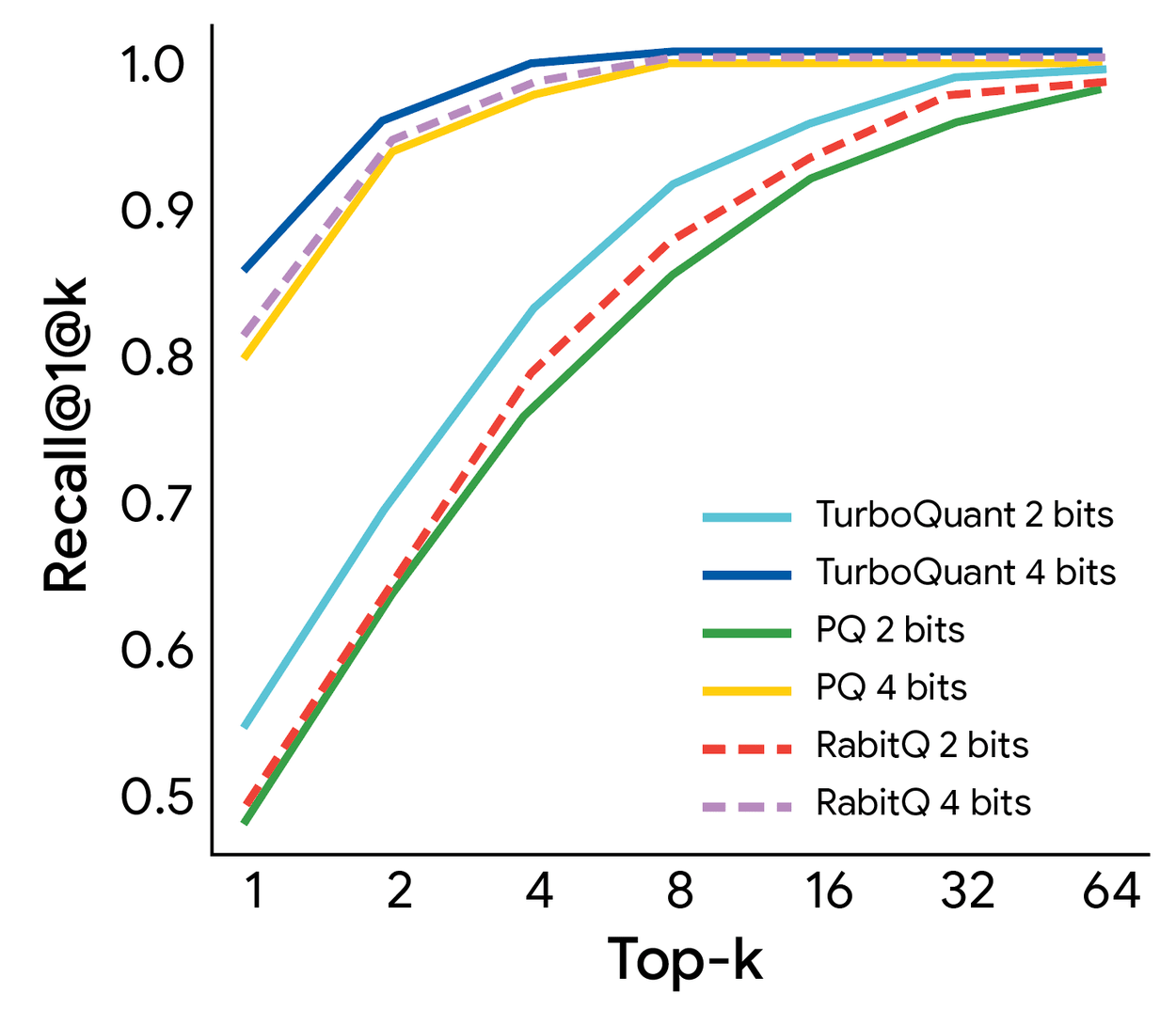

Google Research introduced TurboQuant, a new compression method targeting the key-value (KV) cache, the core memory bottleneck in large language model inference. Google reports that it shrinks the KV cache by at least 6x and delivers speedups of up to 8x, with no accuracy loss on the benchmarks tested.
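For a sense of scale, the back-of-the-envelope calculation below estimates KV cache size with the standard formula (keys plus values, per layer, per token). The model shape is a hypothetical example chosen for round numbers, not a specific Google model, and the 6x factor is simply the figure quoted above applied to the total.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_value=2, batch=1):
    """Approximate KV cache size in bytes for one decoding session."""
    # Factor of 2: each layer stores one key tensor and one value tensor.
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value * batch


GIB = 2**30

# Hypothetical shape: 32 layers, 8 KV heads of width 128, 128K-token context, 16-bit values.
baseline = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=128 * 1024)
compressed = baseline / 6  # applying the reported "at least 6x" reduction

print(f"baseline:   {baseline / GIB:.2f} GiB")
print(f"compressed: {compressed / GIB:.2f} GiB")
```

Under these assumptions a single long-context session drops from 16 GiB of cache to under 3 GiB, which is the difference between needing a dedicated accelerator per session and fitting several sessions on one.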

Technically, TurboQuant combines two innovations: PolarQuant, which simplifies vector geometry for high-quality compression, and Quantized Johnson-Lindenstrauss (QJL), a 1-bit error correction method that removes bias in attention calculations. Unlike traditional quantization approaches, it requires no retraining and introduces virtually no runtime overhead, making it deployable on existing models.
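The 1-bit idea can be illustrated with a toy sign-sketch estimator in pure Python. This is a sketch of the general quantized Johnson-Lindenstrauss technique as publicly described, not Google's implementation: the function names, the dimension `d`, the number of projections `m` and the use of a dense Gaussian matrix are all assumptions made for illustration. Each key is stored as one sign bit per random projection plus its norm; the query stays in full precision, and a fixed scaling factor makes the inner-product estimate unbiased.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def gaussian_matrix(m, d, rng):
    """m random projection directions with i.i.d. standard normal entries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]


def encode_key(S, k):
    """Keep only the sign of each projection of k, plus the norm of k."""
    bits = [1 if dot(row, k) >= 0 else -1 for row in S]
    return bits, math.sqrt(dot(k, k))


def estimate_dot(S, q, bits, k_norm):
    """Unbiased estimate of <q, k> from a full-precision query and 1-bit key sketch."""
    total = sum(b * dot(row, q) for row, b in zip(S, bits))
    # sqrt(pi/2) cancels E|g| = sqrt(2/pi) for a standard normal g.
    return math.sqrt(math.pi / 2) * k_norm * total / len(S)


def demo(d=16, m=4000, seed=0):
    rng = random.Random(seed)

    def unit_vector():
        v = [rng.gauss(0.0, 1.0) for _ in range(d)]
        n = math.sqrt(dot(v, v))
        return [x / n for x in v]

    q, k = unit_vector(), unit_vector()
    S = gaussian_matrix(m, d, rng)
    bits, k_norm = encode_key(S, k)
    return dot(q, k), estimate_dot(S, q, bits, k_norm)


if __name__ == "__main__":
    exact, approx = demo()
    print(f"exact <q,k> = {exact:.4f}, 1-bit estimate = {approx:.4f}")
```

The estimate gets tighter as `m` grows (the error shrinks roughly with the square root of `m`), which is the trade-off between bits stored per key and accuracy of the attention scores.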

The strategic implication is significant: KV cache limits are one of the main constraints on long-context and multi-turn agents. By drastically reducing memory footprint, TurboQuant makes persistent, real-time, and multi-agent systems far more practical—shifting the bottleneck from hardware scaling to software efficiency.

SAP said it will acquire Reltio to strengthen SAP Business Data Cloud and make both SAP and non-SAP enterprise data AI-ready. The strategic point is the data layer: SAP is positioning trusted, unified, low-latency master data as the backbone for Joule and multi-agent workflows, not just a back-office cleanup project. The release explicitly ties the deal to enterprise-wide agentic AI, with Reltio’s entity resolution, data unification, and MCP support meant to help agents act on cleaner, more interoperable business context. Among the March 27 announcements, this is one of the clearest signals that big enterprise vendors see agentic AI as a data architecture problem as much as a model problem.

Salesforce published a behind-the-scenes deployment story showing that its own IT support AI agents now handle 40% of support cases. The company says that translated into 9,500 automatically resolved cases and $57,000 in savings within the first two months, in an environment that manages about 25,000 support tickets per month for 76,000 employees. Strategically, this matters because it is not just product marketing; it is a concrete internal operations example with reported volume, savings, and rollout details around sandboxing, data masking, and least-privilege access. For Agent Pulse readers, this is a notable real-world proof point that agents are moving from demo mode into measurable enterprise service workflows.

Optimove introduced AI Decisioning Studio, a control layer where marketers can manage and monitor a team of AI agents from one interface. It launched with four coordinated agents focused on journeys, offers, content, and send-time optimization, with shared context designed so each decision informs the others. The strategic implication is that marketing vendors are now packaging agent systems less as one-off copilots and more as orchestrated multi-agent teams that compound performance across campaigns. That makes this a meaningful step toward operationalized agentic marketing rather than isolated AI features.

Featured AI Agents

Orloj - Declarative agent infrastructure as code for multi-agent AI orchestration

Handinger - Turn any website into clean Markdown

Agentman - AI agents for your entire medical practice back office

Dzine AI - All-in-one AI image and video generator

Vibe Otter - Build a professional website in just 30 minutes

Nano Banana - Prompt-based photo editing with character consistency

TheLibrarian.io - Your WhatsApp AI sidekick

Blocks - Platform that brings coding agents into your development workflow

What to Watch this Week

🎙️AI on Fire: Real Builders. Real Heat.

Stories from people building AI Agents. Explore all episodes here

Building with AI Agents? Come talk about it.

AI on Fire is the podcast where we speak with founders and builders shaping the agent economy.

🏆 The Leaderboard Never Sleeps

The global ranking of AI agents is shifting every day. Who’s on top? Who just dropped?

🗺️ The Map of AI Agents (Live & Growing)

We’re charting the entire AI agent ecosystem — thousands of options across categories.

Your next agent is already on the map.
